Storage Benchmark Kit

The Ultimate Performance Benchmarking Framework for Any Storage System

🚀 Why SBK?

For Storage Companies/Vendors:

- 🎯 Accurate Performance Insights: 100% accurate latency percentiles without sampling

- 📊 Comprehensive Analytics: Detailed throughput, latency, and performance metrics

- 🔧 Easy Integration: Simple driver interface to benchmark your storage system

- 🌐 Enterprise Ready: Docker, Kubernetes, and distributed benchmarking support

- 📈 Competitive Advantage: Showcase your storage system’s true performance potential

For Open Source Developers:

- 🛠️ Simple Driver Development: Clean API with comprehensive documentation

- 🤝 Active Community: Collaborate with storage experts and companies

- 📚 Learning Opportunity: Understand storage system performance characteristics

- 🏆 Showcase Skills: Contribute to a high-performance benchmarking framework

- 🔥 High Impact: Your contributions help companies make better storage decisions

Any Storage System… Any Payload… Any Time Stamp…

Cloud Deployable… Dockers… Kubernetes…

🎯 Key Features

📊 Unmatched Accuracy

- 100% Accurate Percentiles: No sampling - every operation is measured

- Comprehensive Metrics: 17+ latency percentiles (5th to 99.99th)

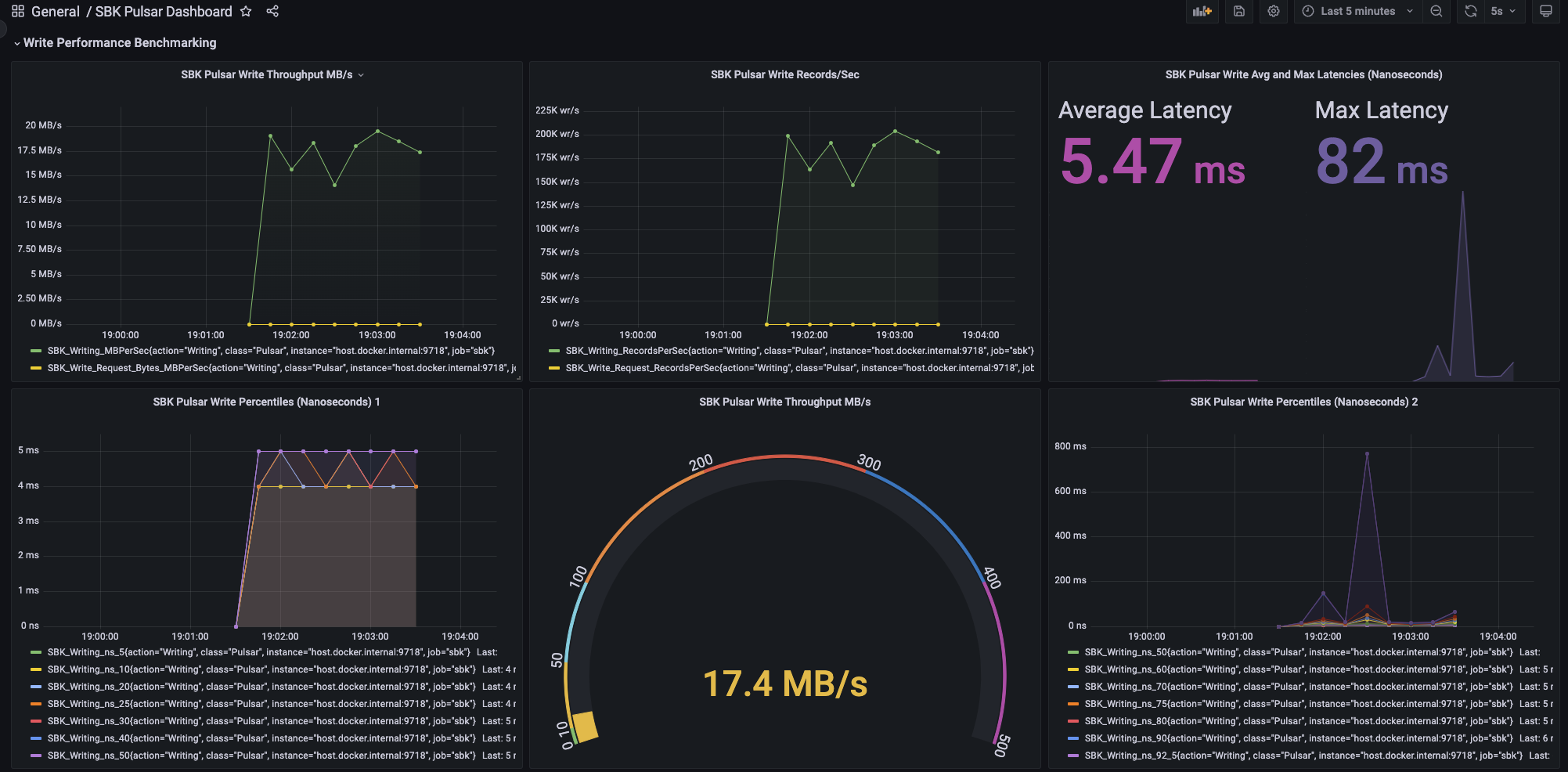

- Real-time Monitoring: Live performance data via Prometheus/Grafana

- Multi-threaded: Support for concurrent writers and readers

- AI-Powered Performance Analysis: Generate comprehensive Excel reports with AI-driven insights and comparative analysis using sbk-charts
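To illustrate what "no sampling" means for percentiles, the sketch below counts every recorded latency in a histogram and reads percentiles from the full counts. It is a minimal illustration and not SBK's actual implementation; the class name `ExactPercentiles` and its methods are hypothetical.

```java
/** Exact percentiles over every recorded latency (illustrative sketch, not SBK code). */
final class ExactPercentiles {
    private final long[] counts; // counts[v] = number of operations with latency v
    private long total;          // total number of recorded operations

    ExactPercentiles(int maxLatency) {
        this.counts = new long[maxLatency + 1];
    }

    /** Records one latency value; every operation is counted, none is sampled out. */
    void record(int latency) {
        if (latency < 0 || latency >= counts.length) {
            throw new IllegalArgumentException("latency out of range: " + latency);
        }
        counts[latency]++;
        total++;
    }

    /** Returns the smallest latency v such that at least pct percent of values are <= v. */
    int percentile(double pct) {
        if (total == 0) {
            throw new IllegalStateException("no latencies recorded");
        }
        long rank = Math.max(1, (long) Math.ceil(pct * total / 100.0));
        long seen = 0;
        for (int v = 0; v < counts.length; v++) {
            seen += counts[v];
            if (seen >= rank) {
                return v;
            }
        }
        return counts.length - 1;
    }
}
```

For example, after recording the latencies 1 to 100 once each, `percentile(50)` returns 50 and `percentile(99)` returns 99. The memory cost depends on the latency range rather than on the number of operations, which is one plausible reason a benchmark would bound the range with minimum and maximum latency options.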

🏗️ Universal Compatibility

- Any Storage System: Databases, message queues, file systems, cloud storage

- Any Data Type: Byte arrays, strings, custom payloads

- Any Time Unit: Milliseconds, microseconds, nanoseconds

- Flexible Deployment: Standalone, Docker, Kubernetes, distributed clusters

⚡ Extreme Performance

- High Throughput: Designed to push storage systems to their limits

- Low Overhead: Minimal impact on storage system performance

- Scalable Architecture: Benchmark from single nodes to large clusters

- Multiple Modes: Burst, throughput, rate-limited, and end-to-end latency testing

SBK can be deployed on distributed nodes using the applications SBM, SBK-GEM and SBK-GEM-YAL. SBK can run on multiple nodes, and the performance results can be aggregated on a single master node called SBM (Storage Benchmark Monitor).

The design principle of SBK is performance benchmarking of ‘Any Storage System’ with ‘Any Type of data payload’ and ‘Any Time Stamp’. SBK is not specific to a particular type of storage system: it can benchmark file systems, databases, distributed storage systems or message queues once an SBK driver that specifies the IO operations of the storage system is added. The list of supported drivers is given below. SBK also supports a variety of payloads, such as byte array, byte buffer and string, and you can add your own payload type. Latency values can be measured in milliseconds, microseconds or nanoseconds.

The SBK is built on PerL (Performance Logger).

Watch the 10-minute video on the SBK Overview.

SBK supports performance benchmarking of the following storage systems:

| # | Driver | # | Driver |

|---|---|---|---|

| 1. | Activemq | 28. | Kafka |

| 2. | Artemis | 29. | Leveldb |

| 3. | Asyncfile | 30. | Linkedbq |

| 4. | Atomicq | 31. | Mariadb |

| 5. | Bookkeeper | 32. | Memcached |

| 6. | Cassandra | 33. | Minio |

| 7. | Cephs3 | 34. | Mongodb |

| 8. | Chromadb | 35. | Mssql |

| 9. | Concurrentq | 36. | Mysql |

| 10. | Conqueue | 37. | Nats |

| 11. | Couchbase | 38. | NatsStream |

| 12. | Couchdb | 39. | Nsq |

| 13. | Csv | 40. | Null |

| 14. | Db2 | 41. | Openio |

| 15. | Derby | 42. | Postgresql |

| 16. | Dynamodb | 43. | Pravega |

| 17. | Elasticsearch | 44. | Pulsar |

| 18. | Exasol | 45. | Rabbitmq |

| 19. | Fdbrecord | 46. | Redis |

| 20. | File | 47. | Redpanda |

| 21. | Filestream | 48. | Rocketmq |

| 22. | Foundationdb | 49. | Rocksdb |

| 23. | H2 | 50. | Seaweeds3 |

| 24. | Halodb | 51. | Sqlite |

| 25. | Hdfs | 52. | Solr |

| 26. | Hive | 53. | Syncq |

| 27. | Jdbc | | |

In the future, many more storage system drivers will be plugged in.

🌟 Why Contribute to SBK?

🏢 For Storage Companies

- Showcase Performance: Demonstrate your storage system’s superiority

- Customer Confidence: Provide transparent, verifiable performance metrics

- Competitive Edge: Stand out with comprehensive benchmarking support

- Community Engagement: Connect with potential customers and partners

- Marketing Leverage: Use SBK results in your marketing materials

👨💻 For Open Source Developers

- High-Impact Contributions: Your code helps companies make critical decisions

- Learning Opportunity: Deep understanding of storage system performance

- Portfolio Builder: Work on a project used by major companies

- Community Recognition: Get acknowledged for your expertise

- Skill Development: Work with high-performance Java, distributed systems

🎁 What You Get

- Comprehensive Documentation: Step-by-step driver development guide

- Template Support: Automated driver generation with Gradle commands

- Code Review: Expert feedback on your contributions

- Community Support: Active Slack channel and issue tracking

- Showcase Platform: Your driver featured in our driver ecosystem

🚀 Quick Start for Developers

Adding Your Storage Driver - It’s Easy!

- Generate your driver template:

# Generate your driver template in seconds!

./gradlew addDriver -Pdriver="your-storage-name"

- Implement the Storage interface (just 7 methods!)

- Implement the Writer and Reader interfaces

- Add your driver to the build configuration

- You’re done! 🎉

📝 Simple Driver Example

public class MyStorage implements Storage<byte[]> {

// Just implement these 7 methods:

public void addArgs(ParameterOptions params) { /* add your params */ }

public void parseArgs(ParameterOptions params) { /* parse your params */ }

public void openStorage(ParameterOptions params) throws IOException { /* connect */ }

public void closeStorage(ParameterOptions params) throws IOException { /* cleanup */ }

public DataWriter<byte[]> createWriter(int id, ParameterOptions params) { /* writer */ }

public DataReader<byte[]> createReader(int id, ParameterOptions params) { /* reader */ }

public DataType<byte[]> getDataType() { return new ByteArray(); }

}

🎯 What You Get Out-of-the-Box

- Automatic Performance Metrics: Throughput, latency, percentiles

- Real-time Monitoring: Prometheus integration

- Grafana Dashboards: Beautiful visualizations

- Docker Support: Containerized benchmarking

- Distributed Testing: Multi-node benchmarking

- CSV Export: Detailed results for analysis

📚 Comprehensive Documentation

- Step-by-step tutorials for driver development

- API documentation with examples

- Best practices for performance testing

- Troubleshooting guides for common issues

We welcome open source developers to contribute to this project by adding a driver for your storage device and any features to SBK. Refer to:

- Contributing to SBK for the Contributing guidelines.

- Add your storage driver to SBK for details on how to add your driver (storage device driver or client) for performance benchmarking.

Build SBK

Prerequisites

- Java 25+

- Gradle 9.4+

Building

Checkout the source code:

git clone https://github.com/kmgowda/SBK.git

cd SBK

Build the SBK:

./gradlew build

Untar the SBK distribution to a local folder:

tar -xvf ./build/distributions/sbk-9.0.tar -C ./build/distributions/.

Running SBK locally:

<SBK directory>/./build/distributions/sbk-9.0/bin/sbk -help

...

usage: sbk -out SystemLogger

Storage Benchmark Kit

-class <arg> Storage Driver Class,

Available Drivers [Activemq, Artemis, AsyncFile,

Atomicq, BookKeeper, Cassandra, CephS3, ChromaDB,

ConcurrentQ, Conqueue, Couchbase, CouchDB, CSV,

Db2, Derby, Dynamodb, Elasticsearch, Exasol,

FdbRecord, File, FileStream, FoundationDB, H2,

HaloDB, HDFS, Hive, Jdbc, Kafka, LevelDB,

Linkedbq, MariaDB, Memcached, MinIO, MongoDB,

MsSql, MySQL, Nats, NatsStream, Nsq, Null, OpenIO,

PostgreSQL, Pravega, Pulsar, RabbitMQ, Redis,

RedPanda, RocketMQ, RocksDB, SeaweedS3, Solr,

SQLite, Syncq]

-help Help message

-maxlatency <arg> Maximum latency;

use '-time' for time unit; default:180000 ms

-millisecsleep <arg> Idle sleep in milliseconds; default: 0 ms

-minlatency <arg> Minimum latency;

use '-time' for time unit; default:0 ms

-out <arg> Logger Driver Class,

Available Drivers [CSVLogger, GrpcLogger,

PrometheusLogger, Sl4jLogger, SystemLogger]

-readers <arg> Number of readers

-records <arg> Number of records(events) if 'seconds' not

specified;

otherwise, Maximum records per second by

writer(s); and/or

Number of records per second by reader(s)

-ro <arg> Readonly Benchmarking,

Applicable only if both writers and readers are

set; default: false

-rq <arg> Benchmark Reade Requests; default: false

-rsec <arg> Number of seconds/step for readers, default: 0

-rstep <arg> Number of readers/step, default: 1

-seconds <arg> Number of seconds to run

if not specified, runs forever

-size <arg> Size of each message (event or record)

-sync <arg> Each Writer calls flush/sync after writing <arg>

number of of events(records); and/or

<arg> number of events(records) per Write or Read

Transaction

-thread <arg> Thread Type [p: platform, f: fork-join,

v:virtual], default: p

-throughput <arg> If > 0, throughput in MB/s

If 0, writes/reads 'records'

If -1, get the maximum throughput (default: -1)

-time <arg> Latency Time Unit [ms:MILLISECONDS,

mcs:MICROSECONDS, ns:NANOSECONDS]; default: ms

-wq <arg> Benchmark Write Requests; default: false

-writers <arg> Number of writers

-wsec <arg> Number of seconds/step for writers, default: 0

-wstep <arg> Number of writers/step, default: 1

Please report issues at https://github.com/kmgowda/SBK

To check the SBK build, issue the command:

./gradlew check

Build only the SBK install binary

./gradlew installDist

The executable binary will be available at: [SBK directory]/./build/install/sbk/bin/sbk

Running Performance benchmarking

The SBK can be executed to

- write/read a specific amount of events/records to/from the storage driver (device/cluster)

- write/read the events/records for the specified amount of time

SBK outputs the data written/read, the average throughput and latency, the minimum and maximum latency, and the latency percentiles 5th, 10th, 20th, 25th, 30th, 40th, 50th, 60th, 70th, 75th, 80th, 90th, 92.5th, 95th, 97.5th, 99th, 99.25th, 99.5th, 99.75th, 99.9th, 99.95th and 99.99th for every 5-second interval, as shown below.

Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 5 seconds, 70.8 MB, 742765 records, 148523.3 records/sec, 14.16 MB/sec, 6.5 ms avg latency, 3 ms min latency, 45 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 2; Latency Percentiles: 4 ms 5th, 5 ms 10th, 5 ms 20th, 5 ms 25th, 5 ms 30th, 5 ms 40th, 6 ms 50th, 7 ms 60th, 8 ms 70th, 8 ms 75th, 8 ms 80th, 9 ms 90th, 9 ms 92.5th, 10 ms 95th, 11 ms 97.5th, 12 ms 99th, 12 ms 99.25th, 14 ms 99.5th, 15 ms 99.75th, 25 ms 99.9th, 26 ms 99.95th, 28 ms 99.99th

Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 5 seconds, 92.4 MB, 968603 records, 193681.9 records/sec, 18.47 MB/sec, 5.1 ms avg latency, 1 ms min latency, 22 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 1; Latency Percentiles: 4 ms 5th, 4 ms 10th, 4 ms 20th, 5 ms 25th, 5 ms 30th, 5 ms 40th, 5 ms 50th, 5 ms 60th, 5 ms 70th, 6 ms 75th, 6 ms 80th, 6 ms 90th, 6 ms 92.5th, 7 ms 95th, 7 ms 97.5th, 9 ms 99th, 9 ms 99.25th, 11 ms 99.5th, 14 ms 99.75th, 15 ms 99.9th, 16 ms 99.95th, 19 ms 99.99th

Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 5 seconds, 91.1 MB, 955197 records, 191001.2 records/sec, 18.22 MB/sec, 5.2 ms avg latency, 2 ms min latency, 45 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 2; Latency Percentiles: 4 ms 5th, 4 ms 10th, 5 ms 20th, 5 ms 25th, 5 ms 30th, 5 ms 40th, 5 ms 50th, 5 ms 60th, 5 ms 70th, 5 ms 75th, 6 ms 80th, 6 ms 90th, 6 ms 92.5th, 7 ms 95th, 7 ms 97.5th, 9 ms 99th, 10 ms 99.25th, 11 ms 99.5th, 15 ms 99.75th, 32 ms 99.9th, 32 ms 99.95th, 32 ms 99.99th

Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 5 seconds, 91.3 MB, 957496 records, 191346.1 records/sec, 18.25 MB/sec, 5.2 ms avg latency, 2 ms min latency, 34 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 2; Latency Percentiles: 4 ms 5th, 4 ms 10th, 4 ms 20th, 5 ms 25th, 5 ms 30th, 5 ms 40th, 5 ms 50th, 5 ms 60th, 5 ms 70th, 5 ms 75th, 6 ms 80th, 6 ms 90th, 6 ms 92.5th, 7 ms 95th, 8 ms 97.5th, 9 ms 99th, 12 ms 99.25th, 14 ms 99.5th, 15 ms 99.75th, 23 ms 99.9th, 23 ms 99.95th, 24 ms 99.99th

Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 5 seconds, 92.0 MB, 964692 records, 192745.7 records/sec, 18.38 MB/sec, 5.2 ms avg latency, 2 ms min latency, 28 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 2; Latency Percentiles: 4 ms 5th, 4 ms 10th, 5 ms 20th, 5 ms 25th, 5 ms 30th, 5 ms 40th, 5 ms 50th, 5 ms 60th, 5 ms 70th, 5 ms 75th, 6 ms 80th, 6 ms 90th, 6 ms 92.5th, 7 ms 95th, 7 ms 97.5th, 9 ms 99th, 10 ms 99.25th, 11 ms 99.5th, 13 ms 99.75th, 24 ms 99.9th, 25 ms 99.95th, 25 ms 99.99th

Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 5 seconds, 87.2 MB, 914807 records, 182924.8 records/sec, 17.45 MB/sec, 5.4 ms avg latency, 1 ms min latency, 31 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 2; Latency Percentiles: 4 ms 5th, 4 ms 10th, 5 ms 20th, 5 ms 25th, 5 ms 30th, 5 ms 40th, 5 ms 50th, 5 ms 60th, 6 ms 70th, 6 ms 75th, 6 ms 80th, 7 ms 90th, 7 ms 92.5th, 8 ms 95th, 9 ms 97.5th, 11 ms 99th, 12 ms 99.25th, 13 ms 99.5th, 18 ms 99.75th, 26 ms 99.9th, 26 ms 99.95th, 26 ms 99.99th

Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 5 seconds, 93.5 MB, 980681 records, 196097.0 records/sec, 18.70 MB/sec, 5.1 ms avg latency, 2 ms min latency, 47 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 3; Latency Percentiles: 4 ms 5th, 4 ms 10th, 4 ms 20th, 5 ms 25th, 5 ms 30th, 5 ms 40th, 5 ms 50th, 5 ms 60th, 5 ms 70th, 5 ms 75th, 5 ms 80th, 6 ms 90th, 6 ms 92.5th, 6 ms 95th, 7 ms 97.5th, 8 ms 99th, 9 ms 99.25th, 10 ms 99.5th, 11 ms 99.75th, 42 ms 99.9th, 44 ms 99.95th, 44 ms 99.99th

Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 5 seconds, 95.0 MB, 995657 records, 199012.0 records/sec, 18.98 MB/sec, 5.0 ms avg latency, 1 ms min latency, 24 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 2; Latency Percentiles: 4 ms 5th, 4 ms 10th, 4 ms 20th, 5 ms 25th, 5 ms 30th, 5 ms 40th, 5 ms 50th, 5 ms 60th, 5 ms 70th, 5 ms 75th, 5 ms 80th, 6 ms 90th, 6 ms 92.5th, 6 ms 95th, 7 ms 97.5th, 8 ms 99th, 8 ms 99.25th, 9 ms 99.5th, 13 ms 99.75th, 20 ms 99.9th, 20 ms 99.95th, 22 ms 99.99th

Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 5 seconds, 92.9 MB, 974343 records, 194790.7 records/sec, 18.58 MB/sec, 5.1 ms avg latency, 2 ms min latency, 31 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 2; Latency Percentiles: 4 ms 5th, 4 ms 10th, 4 ms 20th, 4 ms 25th, 5 ms 30th, 5 ms 40th, 5 ms 50th, 5 ms 60th, 5 ms 70th, 5 ms 75th, 6 ms 80th, 6 ms 90th, 6 ms 92.5th, 7 ms 95th, 8 ms 97.5th, 9 ms 99th, 11 ms 99.25th, 12 ms 99.5th, 14 ms 99.75th, 23 ms 99.9th, 25 ms 99.95th, 26 ms 99.99th

Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 5 seconds, 94.9 MB, 995283 records, 198857.7 records/sec, 18.96 MB/sec, 5.0 ms avg latency, 2 ms min latency, 21 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 1; Latency Percentiles: 4 ms 5th, 4 ms 10th, 4 ms 20th, 4 ms 25th, 5 ms 30th, 5 ms 40th, 5 ms 50th, 5 ms 60th, 5 ms 70th, 5 ms 75th, 5 ms 80th, 6 ms 90th, 6 ms 92.5th, 6 ms 95th, 7 ms 97.5th, 8 ms 99th, 9 ms 99.25th, 10 ms 99.5th, 12 ms 99.75th, 15 ms 99.9th, 17 ms 99.95th, 17 ms 99.99th

Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 5 seconds, 96.6 MB, 1012647 records, 202488.9 records/sec, 19.31 MB/sec, 4.9 ms avg latency, 2 ms min latency, 20 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 1; Latency Percentiles: 4 ms 5th, 4 ms 10th, 4 ms 20th, 4 ms 25th, 4 ms 30th, 5 ms 40th, 5 ms 50th, 5 ms 60th, 5 ms 70th, 5 ms 75th, 5 ms 80th, 6 ms 90th, 6 ms 92.5th, 6 ms 95th, 7 ms 97.5th, 8 ms 99th, 8 ms 99.25th, 9 ms 99.5th, 12 ms 99.75th, 13 ms 99.9th, 16 ms 99.95th, 16 ms 99.99th

2022-08-30 18:58:50 INFO [topic-k-1] [standalone-0-0] Pending messages: 1 --- Publish throughput: 191191.96 msg/s --- 145.87 Mbit/s --- Latency: med: 8.587 ms - 95pct: 11.525 ms - 99pct: 15.027 ms - 99.9pct: 20.051 ms - max: 47.916 ms --- Ack received rate: 191175.29 ack/s --- Failed messages: 0

Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 4 seconds, 98.3 MB, 1030554 records, 207104.9 records/sec, 19.75 MB/sec, 4.8 ms avg latency, 1 ms min latency, 19 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 1; Latency Percentiles: 4 ms 5th, 4 ms 10th, 4 ms 20th, 4 ms 25th, 4 ms 30th, 5 ms 40th, 5 ms 50th, 5 ms 60th, 5 ms 70th, 5 ms 75th, 5 ms 80th, 6 ms 90th, 6 ms 92.5th, 6 ms 95th, 6 ms 97.5th, 8 ms 99th, 8 ms 99.25th, 9 ms 99.5th, 10 ms 99.75th, 14 ms 99.9th, 14 ms 99.95th, 16 ms 99.99th

Total Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 60 seconds, 1096.0 MB, 11492725 records, 191542.2 records/sec, 18.27 MB/sec, 5.2 ms avg latency, 1 ms min latency, 47 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 2; Latency Percentiles: 4 ms 5th, 4 ms 10th, 4 ms 20th, 5 ms 25th, 5 ms 30th, 5 ms 40th, 5 ms 50th, 5 ms 60th, 5 ms 70th, 5 ms 75th, 6 ms 80th, 6 ms 90th, 7 ms 92.5th, 7 ms 95th, 8 ms 97.5th, 10 ms 99th, 11 ms 99.25th, 12 ms 99.5th, 14 ms 99.75th, 18 ms 99.9th, 24 ms 99.95th, 32 ms 99.99th

At the end of the benchmarking session, SBK outputs the total data written/read, the average throughput and latency, the minimum and maximum latency, and the latency percentiles 5th, 10th, 20th, 25th, 30th, 40th, 50th, 60th, 70th, 75th, 80th, 90th, 92.5th, 95th, 97.5th, 99th, 99.25th, 99.5th, 99.75th, 99.9th, 99.95th and 99.99th for the complete set of records written/read. An example final output is shown below:

Total Pulsar Writing 1 writers, 0 readers, 1 max Writers, 0 max Readers, 0.0 write request MB, 0 write request records, 0.0 write request records/sec, 0.00 write request MB/sec, 0.0 read request MB, 0 read request records, 0.0 read request records/sec, 0.00 read request MB/sec, 60 seconds, 1096.0 MB, 11492725 records, 191542.2 records/sec, 18.27 MB/sec, 5.2 ms avg latency, 1 ms min latency, 47 ms max latency; 0 invalid latencies; Discarded Latencies: 0 lower, 0 higher; SLC-1: 1, SLC-2: 2; Latency Percentiles: 4 ms 5th, 4 ms 10th, 4 ms 20th, 5 ms 25th, 5 ms 30th, 5 ms 40th, 5 ms 50th, 5 ms 60th, 5 ms 70th, 5 ms 75th, 6 ms 80th, 6 ms 90th, 7 ms 92.5th, 7 ms 95th, 8 ms 97.5th, 10 ms 99th, 11 ms 99.25th, 12 ms 99.5th, 14 ms 99.75th, 18 ms 99.9th, 24 ms 99.95th, 32 ms 99.99th
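Because each result line follows a fixed layout, it can be post-processed programmatically. The following is a minimal sketch in Java and not part of SBK: the class name `SbkLineParser` and its fields are hypothetical, and the regular expressions are derived only from the sample lines shown above, so they may need adjusting for other SBK versions or logger drivers.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Parses one SBK result line of the form shown in the sample output (illustrative sketch). */
final class SbkLineParser {
    // Matches "... 5 seconds, 70.8 MB, 742765 records, 148523.3 records/sec, 14.16 MB/sec, 6.5 ms avg latency ..."
    private static final Pattern SUMMARY = Pattern.compile(
            "(\\d+) seconds, ([\\d.]+) MB, (\\d+) records, ([\\d.]+) records/sec, "
                    + "([\\d.]+) MB/sec, ([\\d.]+) (\\w+) avg latency");
    // Matches one percentile entry such as "9 ms 92.5th"
    private static final Pattern PERCENTILE = Pattern.compile("(\\d+) \\w+ ([\\d.]+)th");
    private static final String MARKER = "Latency Percentiles:";

    final int seconds;
    final long records;
    final double recordsPerSec;
    final double mbPerSec;
    final double avgLatency;
    final String timeUnit;
    // Percentile (for example 99.9) mapped to its latency in the reported time unit
    final Map<Double, Long> percentiles = new LinkedHashMap<>();

    SbkLineParser(String line) {
        Matcher m = SUMMARY.matcher(line);
        if (!m.find()) {
            throw new IllegalArgumentException("not an SBK result line: " + line);
        }
        seconds = Integer.parseInt(m.group(1));
        records = Long.parseLong(m.group(3));
        recordsPerSec = Double.parseDouble(m.group(4));
        mbPerSec = Double.parseDouble(m.group(5));
        avgLatency = Double.parseDouble(m.group(6));
        timeUnit = m.group(7);
        // Only the text after the marker is scanned for percentile entries
        int start = line.indexOf(MARKER);
        if (start >= 0) {
            Matcher p = PERCENTILE.matcher(line.substring(start + MARKER.length()));
            while (p.find()) {
                percentiles.put(Double.parseDouble(p.group(2)), Long.parseLong(p.group(1)));
            }
        }
    }
}
```

Constructing `new SbkLineParser(line)` for a result line gives access to fields such as `records`, `mbPerSec` and `percentiles.get(99.9)`. Lines that do not match the layout, such as log lines emitted by the storage client, raise an `IllegalArgumentException` and can be skipped by the caller.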

Sliding Latency Coverage (SLC) factors

SBK reports latency data points in the form of quartiles and percentiles. For performance analysis, these quartile and percentile latencies can be combined into two factors: Sliding Latency Coverage 1 (SLC 1) and Sliding Latency Coverage 2 (SLC 2).

SLC 1 indicates the coefficient of dispersion from the lower latency percentiles to the median percentile. It reflects the range between the lower latency percentiles and the median latency, that is, the dispersion of the latency values below the median. SLC 2 indicates the coefficient of dispersion from the median latency percentile, through all higher percentiles, to the last (maximum) percentile (99.99th). If you are comparing two or more storage systems with similar median latencies, SLC 2 indicates which storage system performs better: a lower SLC 2 factor means higher performance. If the SLC 2 factor varies widely, there is an opportunity to improve the stability of the storage system.

Performance results to CSV file

You can use the option “-csvfile” to specify the CSV file in which to log all the performance results. You can then use sbk-charts to generate xlsx files with graphs.

Grafana Dashboards of SBK

When you run SBK, by default it starts an HTTP server, and all the benchmark output is published at the default port number 9718 under the metrics context. If you want to change the port number and context, you can use the command line argument -context. You have to run the Prometheus monitoring system (server [default port number 9090] cum client), which pulls the benchmark data from the local or remote HTTP server. If you want to fetch performance data from additional SBK nodes/instances, or from a port number other than 9718, you need to extend or update targets.json. If you are fetching benchmark data from a remote HTTP server, or from a context other than metrics, you also need to change the default Prometheus server configuration.

Run the Grafana server (cum client) to fetch the benchmark data from Prometheus. For example, a local Grafana server by default fetches the data from the Prometheus server at local port 9090. You can access the local Grafana server at localhost:3000 in your browser using admin/admin as the default username/password. You can import the Grafana dashboards for the existing supported storage drivers from grafana dashboards.
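As a rough illustration of extending the target list, the sketch below generates a target file in the JSON layout used by Prometheus file-based service discovery (a list of objects with a `targets` array and optional `labels`). Whether the targets.json shipped with SBK uses exactly this layout is an assumption here, so compare with the file in the repository before using it. The class name `PrometheusTargets`, the `job` label value and the remote host address are hypothetical.

```java
import java.util.List;
import java.util.stream.Collectors;

/** Builds a Prometheus file-based service discovery document (illustrative sketch). */
final class PrometheusTargets {
    /**
     * Returns a JSON document with one target group.
     * Inputs are assumed to contain no quotes or backslashes, so no JSON escaping is done.
     */
    static String toFileSdJson(List<String> hostPorts, String job) {
        String targets = hostPorts.stream()
                .map(t -> "\"" + t + "\"")
                .collect(Collectors.joining(", "));
        return "[\n"
                + "  {\n"
                + "    \"targets\": [" + targets + "],\n"
                + "    \"labels\": { \"job\": \"" + job + "\" }\n"
                + "  }\n"
                + "]\n";
    }

    public static void main(String[] args) {
        // Local SBK instance on the default port plus one hypothetical remote instance
        System.out.print(toFileSdJson(List.of("localhost:9718", "192.168.0.50:9718"), "sbk"));
    }
}
```

Each additional SBK node is one more `host:port` entry in the `targets` array.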

The sample output of Standalone Pulsar benchmark data with grafana is below

Port conflicts between storage servers and grafana/prometheus

- If you are running the Pravega server in standalone/local mode, or if you are running SBK on the same system as the Pravega controller, then Prometheus port 9090 conflicts with the Pravega controller. Either change the Pravega controller port number, or change the Prometheus port number in the Prometheus targets file before deploying Prometheus.

- If the local port 9718 conflicts with a storage server or any other application, you can change SBK’s HTTP port using the -metrics option; you then need to change the Prometheus targets.json too.
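Before starting a benchmark, it can help to check whether the ports mentioned above are already taken. The sketch below is one simple way to do that in Java; it is not part of SBK, the class name `PortCheck` is hypothetical, and it only tests the loopback address, so a result of "free" is a hint rather than a guarantee.

```java
import java.io.IOException;
import java.net.InetAddress;
import java.net.ServerSocket;

/** Checks whether a local TCP port can currently be bound (illustrative sketch). */
final class PortCheck {
    /** Returns true if a server socket can be bound to the port on the loopback address. */
    static boolean isFree(int port) {
        try (ServerSocket socket = new ServerSocket(port, 1, InetAddress.getLoopbackAddress())) {
            return true;  // bind succeeded; the socket is closed again on exit
        } catch (IOException e) {
            return false; // typically "address already in use"
        }
    }

    public static void main(String[] args) {
        // 9718: SBK metrics, 9090: Prometheus, 3000: Grafana (ports named in this README)
        for (int port : new int[] {9718, 9090, 3000}) {
            System.out.println(port + (isFree(port) ? " is free" : " is in use"));
        }
    }
}
```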

SBK with JMX exporter and Grafana

SBK can start the Java agent to export the JVM metrics to Grafana via Prometheus. You just have to pass the parameter -PjmxExport=true while building SBK.

The command is:

./gradlew installDist -PjmxExport=true

All the SBK JVM metrics will be available at http://localhost:8718/metrics. The network port 8718 is used to expose the metrics. Use the SBK-JMX Grafana dashboard to analyse the SBK JVM metrics.

Distributed SBK

SBK can be deployed in distributed clusters using SBM, SBK-GEM and SBK-GEM-YAL.

SBK Docker Containers

You can build the SBK docker image using the ‘docker’ command as follows:

docker build -f ./dockers/sbk <root directory> --tag <tag name>

An example docker command is:

docker build -f ./dockers/sbk ./ --tag sbk

You can run the docker image too, for example:

docker run -p 127.0.0.1:9718:9718/tcp sbk -class rabbitmq -broker 192.168.0.192 -topic kmg-topic-11 -writers 5 -readers 1 -size 100 -seconds 60

- Note that the option -p 127.0.0.1:9718:9718/tcp maps the container port 9718 to the local port 9718 so that Prometheus can fetch the performance metrics.

- Avoid using the --network host option, because this option overrides the port redirection.

Docker images for single sbk driver

The SBK docker image is large, and its size grows whenever a new driver is added. You can therefore build a docker image for an individual SBK driver; the individual docker files are available in the dockers folder. For example, to build the docker image for the SBK file driver, use the command:

docker build -f ./dockers/sbk-file ./ --tag sbk-file

SBK Docker hub

The SBK Docker images are available at SBK Docker with the latest tags

The SBK docker image pull command is

docker pull kmgowda/sbk

You can also run the docker image directly, for example:

docker run -p 127.0.0.1:9718:9718/tcp kmgowda/sbk:latest -class rabbitmq -broker 192.168.0.192 -topic kmg-topic-11 -writers 5 -readers 1 -size 100 -seconds 60

- Note that the option -p 127.0.0.1:9718:9718/tcp maps the container port 9718 to the local port 9718 so that Prometheus can fetch the performance metrics.

- Avoid using the --network host option, because this option overrides the port redirection.

SBK Docker Compose

The SBK docker compose consists of the SBK docker image and the Grafana and Prometheus docker images. The Grafana image contains the dashboards, which can be directly deployed for performance analytics.

As an example, follow the steps below to see the performance graphs:

- In the SBK directory, build the ‘SBK’ service of the docker compose file as follows:

<SBK dir>% docker compose -f ./docker-compose-sbk-grafana.yml build

- Run the ‘SBK’ service as follows:

<SBK dir>% docker compose run sbk -class concurrentq -writers 1 -readers 5 -size 1000 -seconds 120

- Log in to Grafana at localhost port 3000 with username admin and password sbk.

- Go to the dashboard menu and pick the dashboard of the storage device on which you are running the performance benchmarking. In the above example, you can choose the Concurrent Queue dashboard.

- The SBK docker compose runs the SBK image as a docker container. If you are running SBK as an application and you want to see the SBK performance graphs using Grafana, then use Grafana Docker compose.

SBK Kubernetes

Check these SBK Kubernetes Deployments samples for details on running SBK as a Kubernetes pod. If you want to run Grafana and Prometheus as Kubernetes pods, then use Grafana Kubernetes deployment.

SBK Metrics Network Ports

| Network Port | Description |

|---|---|

| 9717 | SBM GRPC server Port |

| 9718 | SBK performance metrics to Prometheus |

| 9719 | SBM performance metrics to Prometheus |

| 8718 | SBK JVM/JMX metrics to Prometheus |

| 8719 | SBM JVM/JMX metrics to Prometheus |

SBK Execution Modes

The SBK can be executed in the following modes:

1. Burst Mode (Max rate mode)

2. Throughput Mode

3. Rate limiter Mode

4. End to End Latency Mode

1 - Burst Mode / Max Rate Mode

In this mode, SBK pushes/pulls messages to/from the storage client (device/driver) as fast as possible. This mode is used to find the maximum throughput that can be obtained from the storage device or storage cluster (server). This mode can be used for both writers and readers. By default, SBK runs in Burst mode.

For example, the Burst mode for a single Pulsar writer is as follows:

<SBK directory>/build/distributions/sbk/bin/sbk -class Pulsar -admin http://localhost:8080 -broker tcp://localhost:6650 -topic topic-k-223 -partitions 1 -writers 1 -size 1000 -seconds 60 -throughput -1

The -throughput -1 indicates the burst mode. Note that if you do not supply the -throughput parameter, SBK also runs in burst mode.

This test will be executed for 60 seconds because the option -seconds 60 is used.

This test writes events of size 1000 bytes to the topic 'topic-k-223'.

The option '-broker tcp://localhost:6650' specifies the Pulsar broker IP address and port number for write operations.

The option '-admin http://localhost:8080' specifies the Pulsar admin IP and port number for topic creation and deletion.

Note that -writers 1 indicates 1 producer/writer.

If you want to write/read a certain number of records/events, use the -records option without the -seconds option as follows:

<SBK directory>/build/distributions/sbk/bin/sbk -class Pulsar -admin http://localhost:8080 -broker tcp://localhost:6650 -topic topic-k-223 -partitions 1 -writers 1 -size 1000 -records 100000 -throughput -1

-records <number> indicates the total <number> of records to write/read.

2 - Throughput Mode

In this mode, SBK pushes/pulls the messages to/from the storage client (device/driver) with a specified approximate maximum throughput in megabytes per second (MB/s). This mode is used to find the lowest latency that can be obtained from the storage device or storage cluster (server) for a given throughput.

For example, the Throughput mode for 5 Pulsar writers is as follows:

<SBK directory>/build/distributions/sbk/bin/sbk -class Pulsar -admin http://localhost:8080 -broker tcp://localhost:6650 -topic topic-k-223 -partitions 1 -writers 5 -size 1000 -seconds 120 -throughput 10

The -throughput <positive number> indicates the Throughput mode.

This test will be executed with approximate max throughput of 10MB/sec.

This test will be executed for 120 seconds (2 minutes) because option -seconds 120 is used.

This test tries to write and read events of size 1000 bytes to/from the topic 'topic-k-223' of 1 partition.

If the topic 'topic-k-223' does not exist, it will be created with 1 partition.

If the topic already exists, it will be deleted and recreated with 1 partition.

Note that -writers 5 indicates 5 producers/writers.

If you want to write/read a fixed number of events, use the -records option without the -seconds option, as follows:

<SBK directory>./build/distributions/sbk/bin/sbk -class Pulsar -admin http://localhost:8080 -broker tcp://localhost:6650 -topic topic-k-223 -partitions 1 -writers 5 -size 1000 -records 1000000 -throughput 10

-records 1000000 indicates that a total of 1000000 (1 million) events will be written at a throughput of approximately 10MB/sec.
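To get a feel for what a throughput target means in records, the sketch below converts an MB/s figure into a record rate and an approximate run time for the example above (10 MB/s, 1000-byte records, 1 million records). It is plain arithmetic, not SBK code. The class and method names are made up for illustration, and bytesPerMB is a parameter because this README does not state whether SBK counts 1 MB as 1,000,000 or 1,048,576 bytes.

```java
// Illustrative arithmetic only; ThroughputMath is a hypothetical helper, not part of SBK.
final class ThroughputMath {

    /** Records per second needed to sustain mbPerSec with fixed-size records. */
    static double recordsPerSecond(double mbPerSec, int recordSizeBytes, long bytesPerMB) {
        if (mbPerSec <= 0 || recordSizeBytes <= 0 || bytesPerMB <= 0) {
            throw new IllegalArgumentException("all arguments must be positive");
        }
        return mbPerSec * bytesPerMB / recordSizeBytes;
    }

    /** Approximate number of seconds needed to move totalRecords at that rate. */
    static double expectedSeconds(long totalRecords, double mbPerSec,
                                  int recordSizeBytes, long bytesPerMB) {
        return totalRecords / recordsPerSecond(mbPerSec, recordSizeBytes, bytesPerMB);
    }

    public static void main(String[] args) {
        // Assumption: try both common definitions of "MB".
        long[] mbDefinitions = {1_000_000L, 1L << 20};
        for (long bytesPerMB : mbDefinitions) {
            System.out.printf("1 MB = %d bytes: %.2f records/s, %.1f s for 1,000,000 records%n",
                    bytesPerMB,
                    recordsPerSecond(10, 1000, bytesPerMB),
                    expectedSeconds(1_000_000L, 10, 1000, bytesPerMB));
        }
    }
}
```

Under either definition, a run with -records 1000000 at -throughput 10 should take on the order of 100 seconds if the storage system keeps up.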

3 - Rate limiter Mode

This mode is another way of controlling writer/reader throughput, by limiting the number of records per second. In this mode, SBK pushes/pulls messages to/from the storage client (device/driver) at a specified approximate maximum number of records per second. This mode is used to find the lowest latency that the storage device or storage cluster (server) can deliver at a given event rate.

For example, Rate limiter mode for Pulsar with 5 writers is run as follows:

<SBK directory>./build/distributions/sbk/bin/sbk -class Pulsar -admin http://localhost:8080 -broker tcp://localhost:6650 -topic topic-k-225 -partitions 10 -writers 5 -size 100 -seconds 60 -records 1000

The -records <records number> (1000) specifies the records per second to write.

Note that the option "-throughput" SHOULD NOT be supplied for this Rate limiter Mode.

This test will be executed with approximately 1000 events per second by 5 writers.

The topic "topic-k-225" with 10 partitions is created to run this test.

This test will be executed for 60 seconds (1 minute) because option -seconds 60 is used.

Note that in this mode there is no way to specify a total number of events, because -records sets the rate; hence the user must supply the run time using the -seconds option.
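The idea of pacing writes to a records-per-second target can be sketched as follows. This is a generic pacing loop, not SBK's actual rate limiter: RecordPacer and its methods are hypothetical names, and the sketch covers a single writer only.

```java
import java.util.concurrent.TimeUnit;

// Hypothetical single-writer pacer; shown only to illustrate the technique.
final class RecordPacer {
    private final double recordsPerSec;

    RecordPacer(double recordsPerSec) {
        if (recordsPerSec <= 0) {
            throw new IllegalArgumentException("rate must be positive");
        }
        this.recordsPerSec = recordsPerSec;
    }

    /** Nanoseconds after the start at which record number index (0-based) is due. */
    long dueNanos(long index) {
        return (long) (index * 1_000_000_000.0 / recordsPerSec);
    }

    /** Sleeps until record number index is due, measured from startNanos. */
    void awaitTurn(long startNanos, long index) throws InterruptedException {
        long waitNanos = dueNanos(index) - (System.nanoTime() - startNanos);
        if (waitNanos > 0) {
            TimeUnit.NANOSECONDS.sleep(waitNanos);
        }
    }

    public static void main(String[] args) throws InterruptedException {
        RecordPacer pacer = new RecordPacer(1000); // target: 1000 records per second
        long start = System.nanoTime();
        for (long i = 0; i < 500; i++) {
            pacer.awaitTurn(start, i);
            // A real writer would send one record here.
        }
        long elapsedMs = (System.nanoTime() - start) / 1_000_000;
        System.out.println("paced 500 records in about " + elapsedMs + " ms");
    }
}
```

Scheduling each record against the start time, rather than sleeping a fixed interval after every write, keeps the average rate close to the target even when individual writes are slow.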

4 - End to End Latency Mode

In this mode, SBK writes messages to and reads them from the storage client (device/driver) and records the end-to-end latency. End-to-end latency is the time between the start of writing an event/record to the stream and the completion of reading that event/record. In this mode, the user must specify both the number of writers and the number of readers. The -throughput option (Throughput mode) or the -records option (Rate limiter mode) can be used to limit the writers’ throughput or record rate.

For example, the end-to-end latency between a single writer and a single reader of Pulsar is measured as follows:

<SBK directory>./build/distributions/sbk/bin/sbk -class Pulsar -admin http://localhost:8080 -broker tcp://localhost:6650 -topic topic-km-1 -partitions 1 -writers 1 -readers 1 -size 1000 -throughput -1 -seconds 60

The user should specify both writers and readers count for write to read or End to End latency mode.

The -throughput -1 specifies the writer tries to write the events at the maximum possible speed.
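One common way to measure end-to-end latency is to embed the send time in each record and subtract it from the receive time on the reader side. The sketch below shows that idea only; it is not taken from the SBK sources, and EndToEndStamp, stamp and latencyMs are hypothetical names. It also assumes the writer and reader clocks are comparable (for example, both run on the same host).

```java
import java.nio.ByteBuffer;

// Hypothetical helper illustrating timestamp-in-payload latency measurement.
final class EndToEndStamp {
    static final int HEADER_BYTES = Long.BYTES;

    /** Writer side: build a record whose first 8 bytes hold the send time. */
    static byte[] stamp(int recordSize, long sendTimeMs) {
        if (recordSize < HEADER_BYTES) {
            throw new IllegalArgumentException("record must hold at least " + HEADER_BYTES + " bytes");
        }
        byte[] record = new byte[recordSize];
        ByteBuffer.wrap(record).putLong(sendTimeMs);
        return record;
    }

    /** Reader side: latency is the receive time minus the embedded send time. */
    static long latencyMs(byte[] record, long receiveTimeMs) {
        return receiveTimeMs - ByteBuffer.wrap(record).getLong();
    }

    public static void main(String[] args) throws InterruptedException {
        byte[] record = stamp(1000, System.currentTimeMillis());
        Thread.sleep(20); // stands in for the write, storage and read path
        System.out.println("end-to-end latency: "
                + latencyMs(record, System.currentTimeMillis()) + " ms");
    }
}
```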

Contributing to SBK

- All submissions must adhere to the Apache license

All submissions to the master are done through pull requests. If you’d like to make a change:

- Create a new Github issue (SBK issues) describing the problem / feature.

- Fork a branch.

- Make your changes.

- You can refer to the (Oracle Java Coding Style) for coding style; running the Gradle build also helps you find and fix coding style issues.

- Verify all changes are working and Gradle build checkstyle is good.

- Submit a pull request with Issue number, Description and your Sign-off.

Make sure that you update the issue with all details of testing you have done; it will be helpful for me to review and merge.

Another important point to consider is how to keep up with changes against the base branch (the one your pull request is comparing against). Let’s assume that the base branch is master. To make sure that your changes reflect the recent commits, I recommend that you rebase frequently. The command I suggest you use is:

git pull --rebase upstream master

git push --force origin <pr-branch-name>

In the above, I’m assuming that:

- upstream is https://github.com/kmgowda/SBK.git

- origin is ‘your github account/SBK.git’

The rebase might introduce conflicts, so it is better to rebase frequently and avoid long conflict-resolution sessions.

Lombok

SBK uses [Lombok] for code optimizations; I suggest the same for all the contributors too. If you use an IDE you’ll need to install a plugin to make the IDE understand it. Using IntelliJ is recommended.

To import the source into IntelliJ:

- Import the project directory into IntelliJ IDE. It will automatically detect the gradle project and import things correctly.

- Enable Annotation Processing by going to Build, Execution, Deployment -> Compiler -> Annotation Processors and checking ‘Enable annotation processing’.

- Install the Lombok Plugin. This can be found in Preferences -> Plugins. Restart your IDE.

- SBK should now compile properly.

For Eclipse, you can generate the Eclipse project files by running ./gradlew eclipse.

Add your driver to SBK

🚀 Quick Start: Add Your Driver Using Gradle Template

SBK provides an automated template system to generate new storage drivers instantly. This is the recommended approach for AI coding agents and developers.

Step 1: Generate Your Driver Template

# Generate your driver template in seconds!

./gradlew addDriver -Pdriver="your-storage-name"

What this command does:

- Creates a new driver subproject under drivers/your-storage-name/

- Generates all required Java files with proper package structure

- Automatically adds your driver to SBK’s build configuration

- Sets up template classes with clear implementation guidance

Generated Files:

drivers/your-storage-name/
├── build.gradle                          # Driver-specific dependencies
└── src/main/java/io/sbk/driver/YourStorageName/
    ├── YourStorageName.java              # Main storage class (7 methods to implement)
    ├── YourStorageNameConfig.java        # Configuration handling
    ├── YourStorageNameWriter.java        # Write operations
    └── YourStorageNameReader.java        # Read operations

Step 2: Add Driver Dependencies

Edit drivers/your-storage-name/build.gradle to add your storage system’s dependencies:

dependencies {
    api project(":sbk-api")

    // Add your storage driver dependencies here
    // Example for a database:
    // implementation 'org.postgresql:postgresql:42.7.3'
    // Example for a message queue:
    // implementation 'org.apache.pulsar:pulsar-client:3.1.0'
}

Step 3: Implement the Storage Interface

Your main storage class (YourStorageName.java) needs to implement 7 simple methods:

public class YourStorageName implements Storage<byte[]> {
    // Driver configuration shared with the writers and readers
    private YourStorageNameConfig config;

    // 1. Add command-line parameters for your driver
    public void addArgs(InputOptions params) {
        // Add driver-specific parameters like:
        // params.addOption("host", true, "Database host");
        // params.addOption("port", true, "Database port");
    }

    // 2. Parse the command-line parameters
    public void parseArgs(ParameterOptions params) {
        // Extract and validate parameters:
        // config.host = params.getOptionValue("host", "localhost");
        // config.port = Integer.parseInt(params.getOptionValue("port", "5432"));
    }

    // 3. Initialize connection to your storage system
    public void openStorage(ParameterOptions params) throws IOException {
        // Establish connection, create session, etc.
    }

    // 4. Clean up resources
    public void closeStorage(ParameterOptions params) throws IOException {
        // Close connections, cleanup resources
    }

    // 5. Create writer instance (called for each writer thread)
    public DataWriter<byte[]> createWriter(int id, ParameterOptions params) {
        return new YourStorageNameWriter(id, params, config);
    }

    // 6. Create reader instance (called for each reader thread)
    public DataReader<byte[]> createReader(int id, ParameterOptions params) {
        return new YourStorageNameReader(id, params, config);
    }

    // 7. Specify data type (byte[] is the default)
    public DataType<byte[]> getDataType() {
        return new ByteArray(); // Return another DataType implementation if your driver needs one
    }
}

Step 4: Implement Writer and Reader Classes

Writer Class (YourStorageNameWriter.java):

public class YourStorageNameWriter implements Writer<byte[]> {

    // Write data asynchronously (required)
    public void writeAsync(byte[] data) throws IOException {
        // Write data to your storage system
        // SBK automatically measures write latency
    }

    // Flush/sync data (optional)
    public void sync() throws IOException {
        // Ensure data is persisted
    }

    // Close writer (required)
    public void close() throws IOException {
        // Cleanup writer resources
    }
}

Reader Class (YourStorageNameReader.java):

public class YourStorageNameReader implements Reader<byte[]> {

    // Read data synchronously (required)
    public void read() throws IOException {
        // Read data from your storage system
        // SBK automatically measures read latency
    }

    // Close reader (required)
    public void close() throws IOException {
        // Cleanup reader resources
    }
}

Step 5: Build and Test

# Build SBK with your new driver

./gradlew build

# Extract the distribution

tar -xvf ./build/distributions/sbk-*.tar -C ./build/distributions/.

# Test your driver

./build/distributions/sbk-*/bin/sbk -class YourStorageName -help

🎯 Implementation Patterns by Storage Type

For Databases (SQL/NoSQL):

- Use connection pools in openStorage()

- Implement batch writes in writeAsync() for better performance

- Handle connection failures gracefully

For Message Queues:

- Create producers/consumers in writer/reader constructors

- Use async APIs to avoid blocking

- Handle acknowledgments properly

For File Systems:

- Use buffered I/O for better performance

- Implement proper file handle management

- Consider memory-mapped files for high throughput
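As a minimal illustration of the buffered I/O point for file systems, the sketch below writes fixed-size records through a BufferedOutputStream so that many small writes are coalesced into fewer system calls. It uses only the JDK. The class name, the 64 KiB buffer size and the record sizes are arbitrary choices for the example, not values taken from SBK's file driver.

```java
import java.io.BufferedOutputStream;
import java.io.IOException;
import java.io.OutputStream;
import java.nio.file.Files;
import java.nio.file.Path;

// Hypothetical example class; not part of SBK.
final class BufferedFileWrite {

    /** Writes count records of recordSize bytes and returns the resulting file size. */
    static long writeRecords(Path file, int recordSize, int count) throws IOException {
        byte[] record = new byte[recordSize];
        // try-with-resources flushes and closes the stream, so the file handle is not leaked
        try (OutputStream out = new BufferedOutputStream(Files.newOutputStream(file), 64 * 1024)) {
            for (int i = 0; i < count; i++) {
                out.write(record);
            }
        }
        return Files.size(file);
    }

    public static void main(String[] args) throws IOException {
        Path file = Files.createTempFile("sbk-sketch", ".bin");
        try {
            long bytes = writeRecords(file, 1000, 10_000);
            System.out.println("wrote " + bytes + " bytes to " + file);
        } finally {
            Files.deleteIfExists(file);
        }
    }
}
```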

For Cloud Storage:

- Handle network timeouts and retries

- Use multipart uploads for large files

- Implement proper authentication

📋 Complete Implementation Checklist

- Run ./gradlew addDriver -Pdriver="your-name"

- Add dependencies in build.gradle

- Implement 7 methods in main storage class

- Implement writer class methods

- Implement reader class methods

- Add error handling and validation

- Test with ./gradlew build

- Verify driver appears in help output

- Run basic benchmark tests

🔧 Advanced Features

Custom Data Types:

If you need to work with data other than byte[], override getDataType():

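public DataType<String> getDataType() {
    // For text data. Note: the returned object must be a DataType<String> implementation;
    // java.lang.String itself does not implement DataType.
    return new String();
}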

Configuration Management:

Use the generated Config class to handle complex configurations:

// In your main class
private YourStorageNameConfig config;

// Load configuration in addArgs()
// (mapper is assumed here to be a Jackson ObjectMapper set up to read .properties files)
config = mapper.readValue(
        Objects.requireNonNull(getClass().getClassLoader().getResourceAsStream("YourStorageName.properties")),
        YourStorageNameConfig.class);

Async vs Sync Operations:

- Use writeAsync() for non-blocking operations

- Implement sync() for explicit flush operations

- SBK automatically handles timing and latency measurement

Async Reader Class (YourStorageNameReader.java):

public class YourStorageNameReader implements AsyncReader<byte[]> {

    // Read data asynchronously (required)
    public CompletableFuture<byte[]> readAsync(int size) throws IOException {
        // Read data from your storage system and return a future for the result
        // SBK automatically measures read latency
    }

    // Close reader (required)
    public void close() throws IOException {
        // Cleanup reader resources
    }
}

🐛 Common Pitfalls to Avoid

- Blocking Operations: Never block in writeAsync() or readAsync()

- Resource Leaks: Always implement proper cleanup in close() methods

- Thread Safety: Each writer/reader instance is used by a single thread

- Error Handling: Wrap storage-specific exceptions in IOException

- Configuration: Validate all parameters in parseArgs() before using
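The error-handling point can be sketched as follows: catch the storage client's own exception type and rethrow it as an IOException with the original attached as the cause, so the stack trace is not lost. StoreClient and StoreClientException are hypothetical stand-ins for a real storage SDK; only the wrapping pattern is the point.

```java
import java.io.IOException;

// Hypothetical types; a real driver would use its storage SDK's client and exceptions.
final class ExceptionWrapping {

    /** Stand-in for an SDK-specific unchecked exception. */
    static final class StoreClientException extends RuntimeException {
        StoreClientException(String message) {
            super(message);
        }
    }

    /** Stand-in for a storage client with a single write call. */
    interface StoreClient {
        void put(byte[] data);
    }

    /** Writes one record, converting client failures into IOException with the cause kept. */
    static void write(StoreClient client, byte[] data) throws IOException {
        try {
            client.put(data);
        } catch (StoreClientException ex) {
            throw new IOException("write of " + data.length + " bytes failed", ex);
        }
    }

    public static void main(String[] args) {
        StoreClient failing = data -> {
            throw new StoreClientException("broker unavailable");
        };
        try {
            write(failing, new byte[100]);
        } catch (IOException ex) {
            System.out.println(ex.getMessage() + " (cause: " + ex.getCause().getMessage() + ")");
        }
    }
}
```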

📚 Example Drivers for Reference

- Simple File System: drivers/file

- Database (PostgreSQL): drivers/postgresql

- Message Queue (Kafka): drivers/kafka

- Cloud Storage (S3): drivers/minio

Review these examples to understand best practices for your storage type.

📖 Manual Driver Setup (Alternative Method)

- Create the Gradle subproject, preferably with the name driver-<your driver (storage device) name>.

    - See the Example: [Pulsar driver]

- Create the package io.sbk.<your driver name>

    - See the Example: [Pulsar driver package]

- Add the Java library of the driver, from Maven Central or GitHub Packages, to the dependencies in the driver's build.gradle file.

- In your driver package, implement the interface: [Storage]

    - See the Example: [Pulsar class]

    - Implement the following methods of the Storage interface:

        a). Add the additional (command line) parameters for your driver: [addArgs]

            - The default command line parameters are listed in the help output here: [Building SBK]

        b). Parse your driver-specific parameters: [parseArgs]

        c). Open the storage: [openStorage]

        d). Close the storage: [closeStorage]

        e). Create a single writer instance: [createWriter]

            - createWriter is called multiple times by SBK when multiple writers are specified on the command line.

        f). Create a single reader instance: [createReader]

            - createReader is called multiple times by SBK when multiple readers are specified on the command line.

        g). Get the data type: [getDataType]

            - If your data type is byte[] (byte array), there is no need to override this method. See the example: [Pulsar class]

            - If your Storage, Reader and Writer classes operate on a different data type, such as String or a custom data type, override this default implementation.

- Implement the Writer interface: [Writer]

    - See the Example: [Pulsar Writer]

    - Implement the following methods of the Writer class:

        a). Write data (async or sync): [writeAsync]

        b). Flush the data: [sync]

        c). Close the writer: [close]

        d). If you want your own recordWrite implementation to write data and record the start and end times, you can override: [recordWrite]

- Implement the Reader interface: [Reader]

    - Implement the following methods of the Reader class:

        i). Read data

            - For synchronous reads: [read]

                - Example: [Pulsar Reader]

            - For asynchronous reads: [AsyncRead]

                - Example: [File Async Reader]

            - For callback reads, extend the abstract class: [Abstract callback Reader]

                - Example: [RabbitMQ Reader]

        ii). Close the reader: [close]

- Add the Gradle dependency [api project(":sbk-api")] to your subproject (driver)

    - See the Example: [Pulsar Gradle Build]

- Add your subproject to the main Gradle build as a dependency.

    - See the Example: [SBK Gradle]

    - Make sure that the Gradle settings file [SBK Gradle Settings] contains your storage driver subproject name.

- Make sure that your driver is added to the build-drivers.gradle and [settings-drivers.gradle] files.

- Make sure that your packages are allowed for compilation by adding an entry to the checkstyle import-control file.

- That’s all. Now build SBK, including your driver, with the command:

./gradlew build

Untar the SBK distribution to a local folder:

tar -xvf ./build/distributions/sbk.tar -C ./build/distributions/.

- To benchmark your driver, pass the parameter “-class <your driver name>”.

💡 Recommendation: Use the automated template system (./gradlew addDriver) for faster, error-free setup.

Use SBK GitHub packages

If you do not need the entire SBK framework and only want to use the SBK API packages to benchmark the performance of your storage device/software, follow the steps below.

- Add the SBK GitHub package repository and dependency to the Gradle build file of your project as follows:

repositories {
    mavenCentral()
    maven {
        name = "GitHubPackages"
        url = uri("https://maven.pkg.github.com/kmgowda/SBK")
        /*
        credentials {
            username = project.findProperty("github.user") ?: System.getenv("GITHUB_USERNAME")
            password = project.findProperty("github.token") ?: System.getenv("GITHUB_TOKEN")
        }
        */
        credentials {
            username = "sbk-public"
            password = "\u0067hp_FBqmGRV6KLTcFjwnDTvozvlhs3VNja4F67B5"
        }
    }
}

dependencies {
    implementation "io.github.kmgowda.sbk:sbk-api:8.0"
}

A few points to remember here:

    - You need to authenticate with your GitHub username (GITHUB_USERNAME) and GitHub token (GITHUB_TOKEN).

    - The mavenCentral() repository is also required, to fetch SBK’s dependencies.

    - Check this example: File system benchmarking git hub build

- Extend the storage interface Storage by following steps 1 to 5 described in Add your storage driver

    - Check this example: File system benchmarking

- Create a main method to supply your storage class object to SBK to run the performance benchmarking:

public static void main(final String[] args) {
    Storage device = new <your storage class, extending the Storage interface>;
    try {
        // Start the file system benchmarking here
        Sbk.run(args,        /* Command line arguments; use the '-class' option to specify your storage class name */
                packageName, /* Name of the package where your storage device object exists */
                null,        /* Name of your performance benchmarking application; by default, the storage class name is used */
                null         /* Logger; if you don't have your own logger, the Prometheus logger is used by default */
        );
    } catch (ParseException | IllegalArgumentException | IOException | InterruptedException
             | ExecutionException | TimeoutException ex) {
        ex.printStackTrace();
        System.exit(1);
    }
    System.exit(0);
}

    - Check this example: Start File system benchmarking

- That’s all! Run your main method (your Java application) with “-help” to see the benchmarking options.

Use SBK from JitPack

The SBK API package is also available in the JitPack repository. To use the sbk-api package from JitPack, follow the steps below.

- Add the JitPack repository and the SBK dependency to the Gradle build file of your project as follows:

repositories {
    mavenCentral()
    maven { url 'https://jitpack.io' }
}

dependencies {
    implementation "com.github.kmgowda.SBK:sbk-api:8.0"
}

A few points to remember here:

    - The mavenCentral() repository is also required, to fetch SBK’s dependencies.

    - Check this example: File system benchmarking jit pack build

- Extend the storage interface Storage by following steps 1 to 5 described in Add your storage driver

    - Check this example: File system benchmarking

- Create a main method to supply your storage class object to SBK to run the performance benchmarking:

public static void main(final String[] args) {
    Storage device = new <your storage class, extending the Storage interface>;
    try {
        // Start the file system benchmarking here
        Sbk.run(args,        /* Command line arguments; use the '-class' option to specify your storage class name */
                packageName, /* Name of the package where your storage device object exists */
                null,        /* Name of your performance benchmarking application; by default, the storage class name is used */
                null         /* Logger; if you don't have your own logger, the Prometheus logger is used by default */
        );
    } catch (ParseException | IllegalArgumentException | IOException | InterruptedException
             | ExecutionException | TimeoutException ex) {
        ex.printStackTrace();
        System.exit(1);
    }
    System.exit(0);
}

    - Check this example: Start File system benchmarking

- That’s all! Run your main method (your Java application) with “-help” to see the benchmarking options.

Use SBK from Maven Central

The SBK API package is also available on Maven Central. To use the sbk-api package, follow the steps below.

- Add the Maven Central repository and the SBK dependency to the Gradle build file of your project as follows:

repositories {
    mavenCentral()
}

dependencies {
    implementation "io.github.kmgowda.sbk:sbk-api:8.0"
}

A few points to remember here:

    - The mavenCentral() repository is required to fetch the SBK API package and its dependencies.

    - Check this example: File system benchmarking maven build

- Extend the storage interface Storage by following steps 1 to 5 described in Add your storage driver

    - Check this example: File system benchmarking

- Create a main method to supply your storage class object to SBK to run the performance benchmarking:

public static void main(final String[] args) {
    Storage device = new <your storage class, extending the Storage interface>;
    try {
        // Start the file system benchmarking here
        Sbk.run(args,        /* Command line arguments; use the '-class' option to specify your storage class name */
                packageName, /* Name of the package where your storage device object exists */
                null,        /* Name of your performance benchmarking application; by default, the storage class name is used */
                null         /* Logger; if you don't have your own logger, the Prometheus logger is used by default */
        );
    } catch (ParseException | IllegalArgumentException | IOException | InterruptedException
             | ExecutionException | TimeoutException ex) {
        ex.printStackTrace();
        System.exit(1);
    }
    System.exit(0);
}

    - Check this example: Start File system benchmarking

- That’s all! Run your main method (your Java application) with “-help” to see the benchmarking options.

SBK Charts

You can log the performance results to a CSV file using the option ‘-csvfile’. If you have SBK results in one or more CSV files, you can use the Python application ‘sbk-charts’ to compare the SBK benchmarking results and plot the graphs into an Excel sheet. See SBK Charts for further details.

SBK Publications

- SBK is inspired by the Pravega benchmark tool; see Pravega benchmark tool

- SBK uses multiple concurrent queues for fast performance benchmarking; see Design of SBK

- SBK implements SLC (Sliding Latency Coverage) factors to summarize the latency percentiles; see SLC

- SBK uses SBP (Storage Benchmark Protocol) and SBM (Storage Benchmark Monitor) for distributed performance benchmarking; see SBP

🤝 Join Our Community

📞 Get Connected

- GitHub Issues: Report bugs or request features

- Pull Requests: Contribute code

- Discussions: Ask questions and share ideas

📈 Impact Metrics

- 50+ Storage Drivers: From databases to message queues

- Used by Major Companies: Production deployments worldwide

- Regular Releases: Continuous improvements and features

SBK: Where Performance Meets Precision ⚡